CoreWeave is currently burning through billions in a desperate race to stay ahead of a collapsing hardware cycle. While CEO Michael Intrator dismisses the market’s 18% sell-off as a "debt narrative" misunderstanding, the reality is far more clinical. The company is trapped in a capital expenditure cycle where the interest on its massive debt load may soon outpace the rental yields of the very H100s and B200s it spends those billions to acquire. This isn’t a misunderstanding by the "street." It is a fundamental reckoning with the shelf-life of silicon.

The specialized cloud provider has built its entire valuation on being the world’s most efficient landlord for Nvidia’s high-end GPUs. However, as the initial AI gold rush shifts from training massive models to the more commoditized world of inference, the premium CoreWeave charges is under siege.

The Collateralized Cloud Gamble

Most cloud providers build data centers with a thirty-year outlook. CoreWeave is building them with a three-year stopwatch. To fund its meteoric expansion, the company has pioneered a high-stakes financing model that uses its existing inventory of Nvidia chips as collateral for more debt to buy even more chips. On paper, this creates a flywheel of growth. In practice, it creates a terrifying vulnerability to hardware depreciation.

If the value of an H100 chip drops faster than the debt is repaid, the entire balance sheet enters a death spiral. We are already seeing the first signs of this "silicon decay." As Nvidia prepares to flood the market with the Blackwell architecture, the "old" Hopper chips—the very ones CoreWeave used to secure its massive credit lines—are losing their luster. When the collateral loses value, the lenders don't just go away. They tighten the screws.
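A minimal sketch of that dynamic, under loudly stated assumptions: the function name `ltv_path`, the straight-line repayment schedule, the constant annual depreciation rate, and every number in the example are hypothetical illustrations, not CoreWeave's actual loan terms. It tracks the loan-to-value ratio year by year when collateral loses value faster than principal is paid down.

```python
def ltv_path(loan, collateral, annual_depreciation, annual_repayment, years):
    """Return the loan-to-value ratio at the start of each year.

    All inputs are hypothetical. The loan is repaid by a fixed amount per
    year, while the collateral loses a fixed fraction of its value per year.
    """
    path = []
    for _ in range(years + 1):
        # Record LTV before this year's repayment and depreciation apply.
        path.append(loan / collateral if collateral > 0 else float("inf"))
        loan = max(loan - annual_repayment, 0.0)
        collateral *= 1 - annual_depreciation
    return path


if __name__ == "__main__":
    # Hypothetical: borrow 70 against 100 of GPUs, repay 10 per year,
    # and assume the GPUs lose 40% of their value each year.
    for year, ltv in enumerate(ltv_path(70.0, 100.0, 0.40, 10.0, 3)):
        print(f"year {year}: LTV = {ltv:.2f}")
```

With these made-up inputs the ratio starts at 0.70 and reaches roughly 1.0 after a single year, meaning the loan is no longer fully covered by the chips that secure it. Slower depreciation or faster repayment would flatten the curve, which is why the assumed depreciation rate matters more than any other input.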

The 18% stock drop reflects a growing suspicion that CoreWeave is over-leveraged at the exact moment that AI compute prices are beginning to normalize. The market isn't doubting the demand for AI. It is doubting the margins of the middleman.

Why the Rental Model is Cracking

In the early days of the generative AI boom, CoreWeave had a distinct advantage. They were nimble. They could get chips when Microsoft and AWS were bogged down in legacy infrastructure. They were the "boutique" option for startups that needed power immediately. But the giants have woken up.

Microsoft, Google, and Amazon are now designing their own in-house silicon: Amazon's Trainium and Inferentia, Google's TPU, and Microsoft's Maia. These companies don't need to pay the "Nvidia Tax" forever. CoreWeave, conversely, is tethered to Nvidia’s roadmap and Nvidia’s pricing power. This creates a strategic pincer movement.

- From Above: Hyperscalers are offering cheaper, proprietary alternatives for long-term inference.

- From Below: Open-source model efficiency is skyrocketing, meaning companies need fewer GPUs to achieve the same results.

When you are paying double-digit interest on billions in debt, you cannot afford for your customers to become more efficient. You need them to be hungry for every single teraflop you can provide, regardless of the price. That hunger is no longer guaranteed.

The Interest Rate Mirage

Intrator’s defense of the company’s spending often centers on the idea that "demand is infinite." History suggests that whenever a CEO claims demand for a technology is infinite, the market is about to find the bottom.

The company recently secured a $7.5 billion debt facility led by Blackstone. While this was touted as a win, the terms of such deals are rarely favorable to the borrower when the asset involved (graphics cards) has a higher obsolescence rate than a used car. We are looking at a scenario where CoreWeave must maintain near 100% utilization across its entire fleet just to service the interest. There is no margin for error. No room for a cooling AI market. No space for a pivot.

The Ghost of the Fiber Optic Bust

For those of us who covered the late 1990s, this feels hauntingly familiar. In 1999, companies like Global Crossing spent billions laying fiber optic cable, convinced that data demand would grow forever. They were right about the demand—it grew exponentially—but they were wrong about the price. The "glut" of fiber crashed the price of bandwidth so low that the companies who built the pipes went bankrupt, even as the world used more internet than ever.

CoreWeave is laying the "fiber" of the 2020s in the form of GPU clusters. They are right that the world needs compute. They are likely wrong about how much people will pay for it in 2027. Once the scarcity ends, the price of compute moves toward the marginal cost of electricity. For a company carrying billions in high-interest debt, "marginal cost" is a death sentence.

Hidden Operational Fragility

Beyond the debt, there is the physical reality of the data centers. CoreWeave has been forced to move at a breakneck pace, often retrofitting existing spaces or partnering with third-party providers to house their hardware. This fragmented footprint is significantly more expensive to manage than the massive, purpose-built "hyperscale" campuses owned by Big Tech.

When a GPU fails, and they do, the cost of replacement and the downtime are magnified in a debt-leveraged environment. CoreWeave isn't just a tech company; it’s a logistics and maintenance firm that happens to own a lot of expensive fans and silicon. If they can’t achieve the same economies of scale as a company like Oracle, their cost-per-compute will always be higher. In a price war, the high-cost producer dies first.

The Narrative Shift

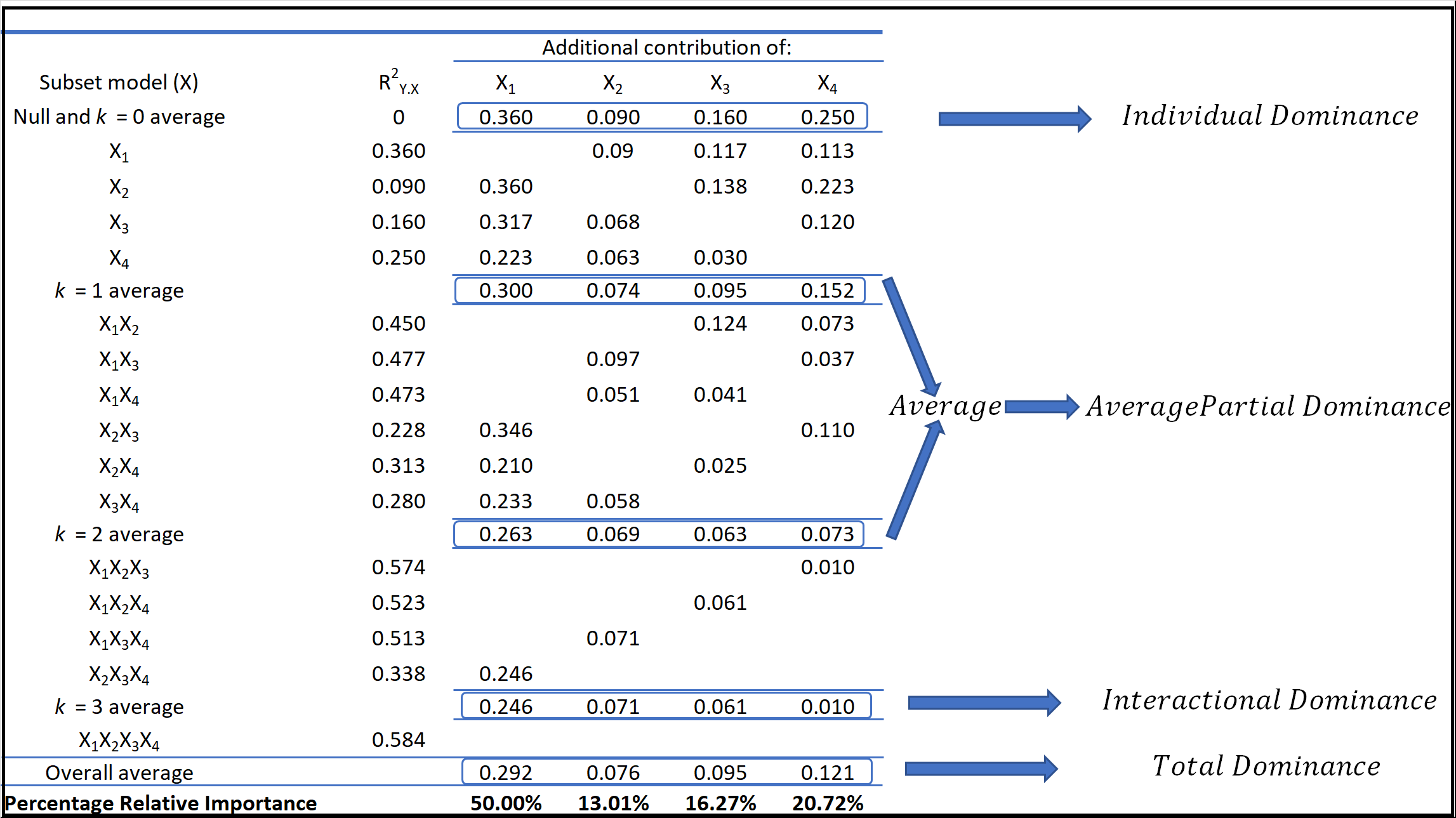

The "debt narrative" that Intrator wants to combat is actually a shift in investor sophistication. A year ago, mentioning "Nvidia" and "H100" in a prospectus was enough to send a valuation to the moon. Today, analysts are looking at the ratio of EBITDA to interest expense. They are looking at the LTV (loan-to-value) of the silicon sitting in racks in New Jersey and Texas.

The math is unforgiving. If CoreWeave pays $30,000 for a chip and rents it out for $2.50 an hour, the "payback period" looks great on a spreadsheet. But that spreadsheet rarely accounts for the cooling costs, the facility leases, the software layer, and the fact that in 24 months, a newer chip will make that $2.50 an hour rental worth $0.50.
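The payback arithmetic can be sketched in a few lines. The $30,000 purchase price and the $2.50 and $0.50 hourly rates come from the paragraph above; the `utilization` and `hourly_opex` parameters, the function names, and the 730-hours-per-month approximation are assumptions added for illustration.

```python
HOURS_PER_MONTH = 730  # approximation: 8,760 hours per year divided by 12


def payback_hours(chip_cost, hourly_rate, utilization=1.0, hourly_opex=0.0):
    """Hours of operation needed for net rental income to repay the chip.

    utilization and hourly_opex (cooling, leases, software) are
    hypothetical knobs, not figures from any company filing.
    """
    net_per_hour = hourly_rate * utilization - hourly_opex
    if net_per_hour <= 0:
        return float("inf")  # the chip never pays for itself
    return chip_cost / net_per_hour


def payback_months(chip_cost, hourly_rate, utilization=1.0, hourly_opex=0.0):
    """Same calculation expressed in approximate calendar months."""
    hours = payback_hours(chip_cost, hourly_rate, utilization, hourly_opex)
    return hours / HOURS_PER_MONTH


if __name__ == "__main__":
    for rate in (2.50, 0.50):
        months = payback_months(30_000, rate)
        print(f"${rate:.2f}/hour: about {months:.1f} months to pay back")
```

At $2.50 an hour, full utilization, and zero operating cost, the chip pays for itself in 12,000 hours, or about sixteen and a half months. At $0.50 an hour the same chip needs 60,000 hours, close to seven years, and any nonzero `hourly_opex` pushes that figure out further.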

Investors are realizing that CoreWeave is a "spread" business. They borrow money at $X$ percent to buy assets that yield $Y$ percent. As $X$ stays high due to persistent inflation and $Y$ falls due to competition, the spread is evaporating.
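To make the spread concrete, here is a small sketch. The function name `annual_spread_income`, the `debt_fraction` parameter, and all of the numbers are hypothetical; `asset_yield` plays the role of $Y$ and `borrowing_rate` the role of $X$.

```python
def annual_spread_income(asset_base, asset_yield, borrowing_rate,
                         debt_fraction=1.0):
    """Yearly income left after interest on a debt-funded asset base.

    asset_yield is Y and borrowing_rate is X, both as decimals.
    debt_fraction is the hypothetical share of assets funded with debt.
    """
    income = asset_base * asset_yield
    interest = asset_base * debt_fraction * borrowing_rate
    return income - interest


if __name__ == "__main__":
    # Hypothetical: 1,000 of assets, borrowing at 12%, yield falling
    # from 20% to 10% as competition pushes rental prices down.
    for asset_yield in (0.20, 0.15, 0.10):
        left = annual_spread_income(1000.0, asset_yield, 0.12)
        print(f"Y = {asset_yield:.0%}: spread income = {left:.1f}")
```

In this made-up case the business earns 80 a year when $Y$ is 20%, and loses 20 a year once $Y$ falls to 10% while $X$ stays at 12%. The sign flips as soon as the yield drops below the borrowing rate, however large the asset base.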

The Hardware Trap

Nvidia itself is part of the problem. By releasing new architectures every year instead of every two years, the company is cannibalizing its own customers' balance sheets. Every time Jensen Huang takes the stage to announce a "10x performance boost," he is effectively telling CoreWeave’s lenders that their collateral is now worth 50% less.

This is the central paradox of the AI infrastructure trade. The faster the technology improves, the faster the previous generation of infrastructure becomes a liability. CoreWeave is running on a treadmill that is constantly accelerating. To stay still, they must run faster; to grow, they must sprint until their hearts give out.

The 18% drop isn't a "glitch" or a misunderstanding. It is the sound of the market doing the math on the shelf life of an H100. If you want to see where this ends, don't look at the AI benchmarks. Look at the secondary market prices for two-generation-old enterprise GPUs. They aren't assets. They are e-waste.

Track the secondary market prices for the Nvidia A100 over the next six months. If those prices drop by more than 15%, the "debt narrative" surrounding CoreWeave will turn into a solvency crisis.