The era of the gentleman’s agreement between Washington and Big Tech has officially ended. For the past eighteen months, the relationship between the federal government and leading AI laboratories was defined by voluntary commitments—a series of polite nods and vague promises made by CEOs in the Oval Office. Those handshakes have now curdled into a high-stakes standoff. As the U.S. government drafts a new, aggressive framework for AI oversight, the friction is no longer just about safety. It is about power. Specifically, it is about who holds the kill switch for the most advanced computational models ever built.

While much of the public discourse focuses on the existential risks of "superintelligence," the immediate conflict centers on a more pragmatic reality: the Department of Commerce and the National Security Council are moving to treat high-end AI as a strategic asset, much like enriched uranium or advanced semiconductors. This shift has placed firms like Anthropic and OpenAI in a defensive crouch. These companies, once the darlings of the administration’s push for innovation, now find themselves under a microscope that tracks everything from their compute clusters to the specific weights of their latest models.

The End of Voluntary Compliance

Washington has realized that asking for cooperation is a failing strategy. The new guidelines currently circulating through the halls of the Eisenhower Executive Office Building are designed to codify what was previously optional. We are seeing a transition from "trust us" to "show us."

The primary driver for this sudden hardening of the state’s position is the realization that dual-use AI models—those capable of both coding a website and engineering a biological pathogen—cannot be governed by the honor system. The government’s new stance insists on rigorous, third-party audits before a model is even released to the public. For a company like Anthropic, which has built its entire brand on the concept of "Constitutional AI" and safety, this is a bitter pill. They argue that their internal safety protocols are already more sophisticated than anything a government agency could devise.

Washington disagrees.

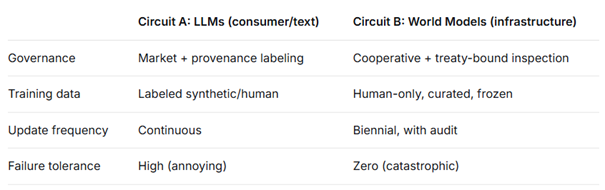

The tension stems from a fundamental mismatch in speed. Silicon Valley moves in "sprints," deploying updates every few weeks. The federal bureaucracy moves in fiscal years. By imposing a mandatory waiting period for safety certifications, the government is effectively putting a governor on the engine of American innovation. This isn't just a regulatory hurdle; it is a fundamental shift in the business model of the AI sector.

The Anthropic Friction Point

Anthropic occupies a unique space in this drama. Founded by defectors from OpenAI who were concerned about the commercialization of the technology, the company was supposed to be the "safe" alternative. However, the very expertise that makes Anthropic a leader in safety also makes it the most resistant to government interference. Its people know where the bodies are buried because they helped dig the graves.

Sources close to the negotiations suggest that Anthropic’s leadership views the new guidelines as a form of intellectual property theft by proxy. To prove a model is safe, a company must reveal the "recipe"—the specific training data, the reinforcement learning parameters, and the architectural nuances that provide a competitive edge. There is a deep-seated fear that once this information enters the government’s orbit, it will inevitably leak to competitors or, worse, state actors.

The friction reached a boiling point during discussions over "red teaming." The government wants the power to appoint its own experts to probe these models for weaknesses. Anthropic and its peers argue that the pool of people capable of red-teaming a model like Claude 3 or GPT-4 is incredibly small, and most of them already work for the labs. Bringing in external, government-vetted contractors is, in the eyes of the industry, a recipe for mediocrity and false security.

The Compute Threshold as a Weapon

The most significant weapon in the government’s new arsenal is the use of computational power as a regulatory trigger. The Department of Commerce is moving toward a system where any training run exceeding a specific floating-point operations (FLOPs) threshold must be reported immediately.

This is a blunt instrument. It assumes that more compute equals more danger. While there is a correlation, it is not a perfect science. We are seeing a trend toward "small-but-mighty" models that use high-quality data to outperform massive, compute-heavy giants. By focusing on the hardware rather than the output, the government risks missing the most dangerous innovations while strangling the most efficient ones.

Consider the following hypothetical scenario. A startup develops a breakthrough in algorithmic efficiency that allows them to train a GPT-4 class model on a fraction of the hardware currently required. Under the current "threshold" thinking, that model might fly under the radar of federal oversight entirely, despite possessing the same capabilities that would trigger an investigation into a larger firm. This loophole highlights the desperation of a regulatory body trying to catch a lightning bolt in a butterfly net.
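The loophole in this scenario can be made concrete with a rough sketch. It assumes the common rule of thumb that training compute is roughly 6 × parameters × training tokens, and it uses a placeholder threshold of 1e26 operations. The threshold, the model sizes, and the function names are illustrative assumptions, not figures taken from the guidelines.

```python
# Illustrative sketch only: the threshold and model sizes are assumptions,
# not figures taken from the proposed guidelines.
ASSUMED_THRESHOLD_FLOPS = 1e26  # hypothetical reporting trigger


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute with the common 6 * N * D rule of thumb."""
    return 6.0 * n_params * n_tokens


def must_report(n_params: float, n_tokens: float,
                threshold: float = ASSUMED_THRESHOLD_FLOPS) -> bool:
    """Return True if the estimated training run meets or exceeds the threshold."""
    return estimate_training_flops(n_params, n_tokens) >= threshold


# A compute-heavy run: 2 trillion parameters on 10 trillion tokens.
large_run = must_report(2e12, 1e13)        # 6 * 2e12 * 1e13 = 1.2e26

# A hypothetical efficient run: 70 billion parameters on 15 trillion tokens.
efficient_run = must_report(7e10, 1.5e13)  # 6 * 7e10 * 1.5e13 = 6.3e24

print(f"large run reportable: {large_run}")
print(f"efficient run reportable: {efficient_run}")
```

Under these assumed numbers the large run crosses the trigger and the efficient one does not. A compute-only rule never asks whether the two models are equally capable, so it cannot see the gap the scenario describes.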

The National Security Nexus

We cannot discuss these guidelines without discussing Beijing. The move to tighten domestic AI rules is inextricably linked to the broader trade war over semiconductors. The U.S. government is terrified that an "open" American model could be downloaded, fine-tuned, and weaponized by the People's Liberation Army.

This has led to a radical proposal within the new guidelines: the "Know Your Customer" (KYC) requirement for cloud providers. Under this rule, companies like Amazon, Microsoft, and Google would be responsible for ensuring that their AI chips aren't being used by foreign entities to train restricted models.

- The Burden of Proof: Cloud providers must now act as an extension of the intelligence community.

- The Cost of Compliance: Smaller AI labs may find themselves priced out of the market due to the administrative overhead required to satisfy these KYC rules.

- The Global Split: If the U.S. makes its environment too restrictive, we may see a "brain drain" to jurisdictions with more permissive laws, such as the UAE or even parts of Europe that are prioritizing growth over absolute safety.

This isn't just about spreadsheets and server racks. It is a battle for the soul of the internet. If the U.S. successfully treats AI as a controlled substance, the dream of a decentralized, open-source AI future dies. We move toward a world of "vetted" intelligence, where only a few massive corporations have the capital and the political connections to stay in the game.

The Ghost of the Microsoft Antitrust Trial

The current climate feels eerily similar to the late 1990s, when the Department of Justice went after Microsoft. Back then, the issue was browser bundling. Today, it is the fundamental infrastructure of thought. The tech giants learned a lesson from the '90s: don't fight the government; become the government.

We are seeing an unprecedented level of "revolving door" activity between AI labs and the agencies tasked with regulating them. This creates a cozy ecosystem that might actually favor the incumbents. If Anthropic and OpenAI help write the rules, they can ensure those rules are just expensive enough to prevent a garage startup from ever competing with them. This is the "moat" strategy disguised as public safety.

The tension with Anthropic specifically arises because they are trying to maintain a shred of independence. They are resisting the "capture" that usually happens in these cycles. But in Washington, if you aren't at the table, you're on the menu. The administration's move to create strict, enforceable guidelines is a clear signal that the time for "collaboration" is over. The state is asserting its dominance over the silicon.

The Technical Reality of Enforcement

Even if these guidelines are signed into law tomorrow, the question of enforcement remains a technical nightmare. How does a government auditor verify that a model hasn't been "jailbroken" by its own creators?

The math behind these models is a "black box" even to the people who build them. We can observe the inputs and the outputs, but the internal logic, encoded in billions of weights and biases, is not something that can be easily "read" like a piece of legal code. The government is essentially trying to regulate a system it does not fully understand, using tools designed for a previous century.

To truly oversee AI, the government would need a dedicated agency with a technical talent pool that rivals the private sector. Currently, the salary gap between a senior AI researcher at a top lab and a government scientist is a factor of ten. Unless the state can attract the best minds, these guidelines will be nothing more than theater—a set of rules that look good on paper but are easily bypassed by anyone with enough GPU time and a clever prompt.

The push for these guidelines is a recognition that the "wild west" era of AI is over. The stakes are too high for the government to remain on the sidelines. But by stepping onto the field so aggressively, they risk breaking the very machine that gives the United States its competitive edge. The friction with Anthropic is just the first tremor of a much larger earthquake.

The Export Control Trap

One of the most controversial elements of the new framework is the potential for export controls on model weights. This would treat the digital files containing a trained AI's "knowledge" as a physical weapon.

This approach ignores the reality of the digital world. You cannot stop a file from being uploaded to a decentralized server or shared via encrypted channels. Once the weights are out, they are out. By focusing on export controls, the government is trying to apply a 20th-century solution to a 21st-century problem. It creates a false sense of security while imposing massive costs on American companies trying to compete in a global market.

If a researcher in Paris or Singapore can access a high-quality open-source model that is 95% as good as a restricted American model, the American company loses its market share without any actual gain in global safety. We are flirting with a scenario where the U.S. regulates itself into irrelevance in the name of a security goal that is fundamentally unachievable through traditional means.

The guidelines also suggest a "kill switch" capability for major models. This would allow the government to order the immediate shutdown of an AI system if it is found to be facilitating illegal activity or posing a national security threat. The logistics of this are terrifying. Who decides what constitutes a threat? What are the appeals processes? If an AI is integrated into a nation's healthcare or energy grid, a "kill switch" could be more dangerous than the threat it is meant to neutralize.

The industry’s pushback isn’t just about protecting profits. It’s about the practicality of operating in an environment where the rules can change with the political winds. Anthropic’s friction with the administration is a warning sign that the bridge between the technologists and the policymakers is collapsing.

The next phase of this conflict will move from the boardroom to the courtroom. We are heading toward a definitive legal showdown over the government’s right to intervene in the development of software before a crime has even been committed. This is the territory of "pre-crime" for algorithms.

If the government succeeds in imposing these strictures, it will set a precedent that extends far beyond AI. It will establish the principle that any sufficiently advanced technology is, by definition, an interest of the state, subject to the same level of control as nuclear weapons or biological agents. For an industry built on the ethos of "permissionless innovation," that is a terminal diagnosis.

The move to chain the giants is underway. Whether those chains hold—or whether they simply force the giants to relocate—will determine the geopolitical hierarchy for the next century. The friction we see today is just the sound of the links being forged.

The most immediate action for any firm operating in this space is to prepare for a "dual-track" development cycle: one for the public, and one for the regulators. This will inevitably slow down progress and increase costs, but in the eyes of Washington, that is a feature, not a bug. They want to slow the world down until they can figure out how to run it.

Start by auditing your own internal compute usage against the proposed FLOPs thresholds. If you are approaching the limit, you are no longer a private company; you are a de facto arm of the state's strategic interest. Accept it, or move your servers.
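One way to begin such an audit is to total the compute each training run has consumed, working from accelerator logs. The sketch below assumes usage is recorded as device count, wall-clock hours, peak throughput per device, and an average utilization fraction. The `TrainingRun` record, the 1e26 threshold, and the 80% warning margin are hypothetical choices for illustration, not values from the guidelines.

```python
from dataclasses import dataclass

ASSUMED_THRESHOLD_FLOPS = 1e26  # hypothetical; the guidelines' figure is not given
WARNING_FRACTION = 0.8          # hypothetical margin for "approaching the limit"


@dataclass
class TrainingRun:
    name: str
    accelerators: int        # number of devices used
    hours: float             # wall-clock training time
    peak_flops_per_s: float  # vendor peak throughput per device
    utilization: float       # average fraction of peak achieved (0 to 1)

    def total_flops(self) -> float:
        """Estimate total operations as devices * seconds * peak * utilization."""
        seconds = self.hours * 3600.0
        return self.accelerators * seconds * self.peak_flops_per_s * self.utilization


def audit(run: TrainingRun, threshold: float = ASSUMED_THRESHOLD_FLOPS) -> str:
    """Classify a run as 'over', 'approaching', or 'under' the threshold."""
    used = run.total_flops()
    if used >= threshold:
        return "over"
    if used >= WARNING_FRACTION * threshold:
        return "approaching"
    return "under"


runs = [
    TrainingRun("small-run", accelerators=1_000, hours=1_000,
                peak_flops_per_s=1e15, utilization=0.4),
    TrainingRun("frontier-run", accelerators=25_000, hours=2_400,
                peak_flops_per_s=1e15, utilization=0.4),
]
for run in runs:
    print(f"{run.name}: {run.total_flops():.2e} FLOPs -> {audit(run)}")
```

With these made-up inputs, the smaller run sits far below the assumed trigger while the larger one lands in the warning band. A real audit would substitute measured utilization and whatever threshold the final rules adopt.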